Your Best Employee is Your Biggest Security Risk (And They Use ChatGPT)

When productivity bypasses the perimeter. Analyzing the data leakage, governance gaps, and prompt injection risks of unvetted AI integration.

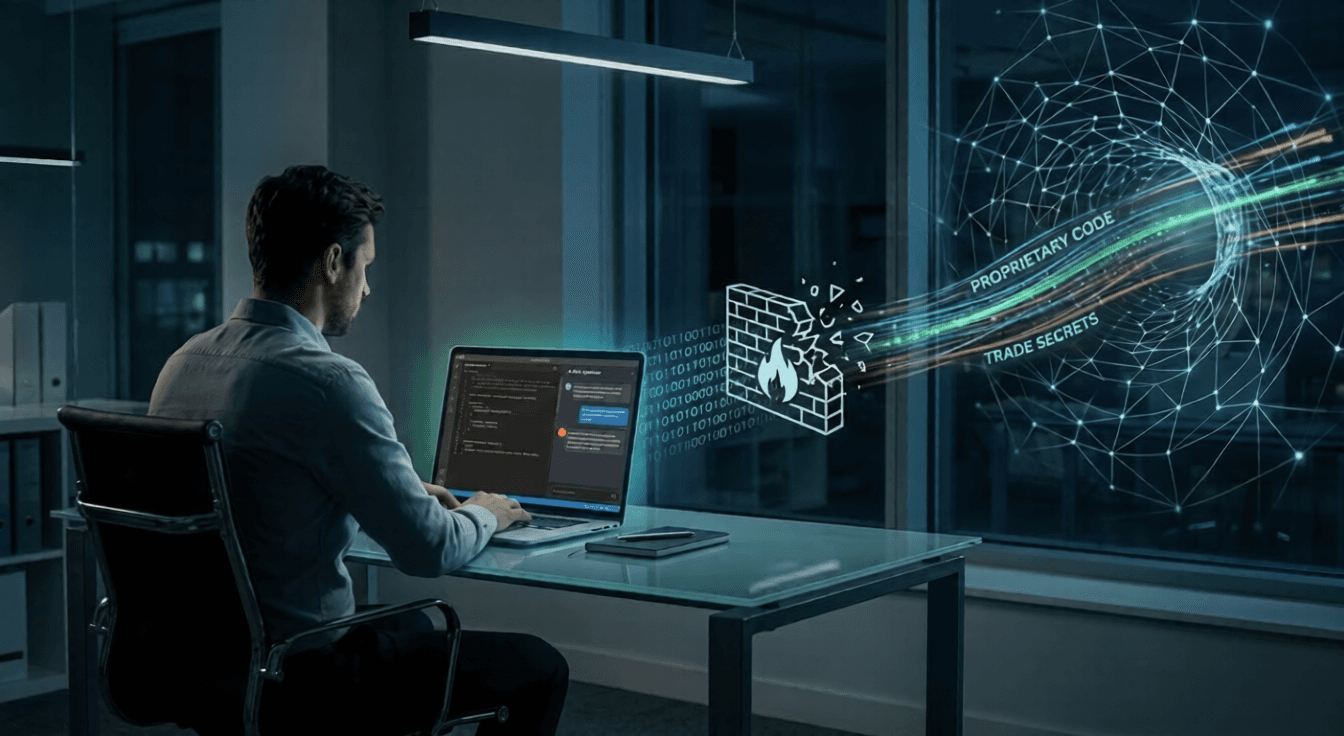

The perimeter didn’t break; it dissolved. When your lead dev pastes 5,000 lines of proprietary code into a ‘Code Optimizer’ AI, your firewall becomes a decoration. Welcome to the era of Shadow AI.

Originally published at Medium

Let’s be honest. We’re all trying to move faster.

The pressure to deliver more, better, and quicker is intense. If you’re a developer trying to squash a bug, a marketer trying to generate 50 headlines, or a data analyst trying to make sense of a massive spreadsheet, you want immediate help.

And help is available. It’s free, it’s instant, and it’s incredibly capable.

It’s ChatGPT. It’s Claude. It’s that neat “AI Chrome Extension” that just appeared.

But here is the hard truth that keeps CISOs awake at night: The most productive, proactive employees in your company are currently your biggest security threat.

They aren’t malicious. They love your company. They just want to do their jobs well. And in their haste to be efficient, they are inadvertently opening the back door and handing over the keys to the kingdom.

Welcome to the pervasive, dangerous world of Shadow AI.

The Road to Hell is Paved with Productivity

We used to worry about “Shadow IT” — employees using Dropbox instead of the approved company share, or setting up their own Trello boards.

Shadow AI is Shadow IT on steroids, armed with the ability to ingest and process proprietary information.

When an employee uses an unvetted, public AI tool to “help” with their work, they are taking sensitive company data and pasting it into a third-party system. A system that, by design, usually uses that data to train its next iteration.

Your competitive advantage just became public training data.

The Anatomy of the Leak

Let’s look at a few all-too-common scenarios:

The Code Leak: Your brilliant lead developer is stuck on a complex function. To save hours of debugging, they paste those 5,000 lines of complex, proprietary algorithm code into a public AI tool with the prompt: “Optimize this and check for bugs.” The AI helps. The code is fixed. But that algorithm — the secret sauce of your product — now resides in a public model’s training set. It could potentially be reconstructed or referenced when a competitor prompts the same model weeks later.

The Strategy Leak: A Product Manager pastes the transcripts of confidential user interviews and a rough draft of the upcoming product roadmap into an AI to “summarize the key pain points.” The summary is great. But your Q3 strategy is now out of your control.

The Legal Leak: A HR representative pastes a sensitive, non-finalized separation agreement for a C-suite executive into an AI to “simplify the language.” PII (Personally Identifiable Information) and confidential legal terms are now compromised.

Press enter or click to view image in full size

The Rising Danger: Beyond Simple Leaks

While data leakage is the most immediate threat, the Shadow AI ecosystem introduces other sinister risks that teams are ill-equipped to handle. We are seeing a massive Governance Gap between how fast employees are adopting these tools and how slowly companies are creating policies for them.

When employees use unvetted AI tools or plugins, they also expose the company to:

Prompt Injection Attacks: An employee might install a shady “AI Writing Assistant” Chrome extension. A malicious actor could use prompt injection — crafting specific inputs that trick the AI tool into executing malicious commands, stealing the data the employee provides, or acting as a beachhead inside the browser.

Data Poisoning: While more abstract, if employees rely on results from models that have suffered Data Poisoning (where an attacker has intentionally manipulated the training data), they could be making critical business decisions based on flawed, biased, or intentionally incorrect information, which they believe is objective AI wisdom.

Press enter or click to view image in full size

Closing the Gap: From “No” to “How”

You cannot solve the Shadow AI problem by banning AI. Total bans do not work; they just force users further into the shadows. People will use these tools because they provide undeniable value.

The solution is not strict prohibition, but smart, guided adoption.

Acknowledge the Governance Gap: Stop pretending it isn’t happening. Assume your employees are already using these tools. Conduct surveys. Check network logs for traffic to known AI domains.

Educate, Don’t Threaten: Most employees honestly do not understand how LLMs (Large Language Models) work. They don’t realize that “pasting” is “sharing.” Run workshops explaining the lifecycle of data within public models.

Provide Sanctioned Alternatives: If the need is real (and it is), meet it. Provide enterprise-grade AI tools (like ChatGPT Enterprise, Microsoft Copilot, or private Azure OpenAI instances) where you have data processing agreements that guarantee company data is not used to train the public models.

Create a Clear Policy: Your AI policy shouldn’t be 50 pages of legalese. It should be simple: “Never put customer PII, internal financials, or source code into a public AI tool. Here is the list of approved enterprise tools you SHOULD use instead.”

The Balance

We are in the middle of a productivity revolution. AI is the most potent tool for efficiency we have ever seen.

The goal isn’t to scare your employees away from innovation. Your company must use AI to remain competitive.

But innovation without security is just a slow-motion disaster. It’s time to bring AI out of the shadows, give your team the proper tools, and ensure that your best employee’s efficiency doesn’t become your company’s undoing.

Let’s Connect and Keep the Conversation Going

I write frequently about cyber security, the evolving tech landscape, and practical AI implementation. I’d love to hear your thoughts on how your organization is handling Shadow AI.

You can find me across the web here:

✍️ Read more on Medium: @syedahmershah

💬 Join the discussion on Dev.to: @syedahmershah

🧠 Deep dives on Hashnode: @syedahmershah

💻 Check my code on GitHub: @ahmershahdev

🔗 Connect professionally on LinkedIn: Syed Ahmer Shah

🧭 All my links in one place on Beacons: Syed Ahmer Shah

🌐 Visit my Portfolio Website: ahmershah.dev

You can also find my verified Google Business profile here.